Posted on: Jul 12, 2018 12 min read

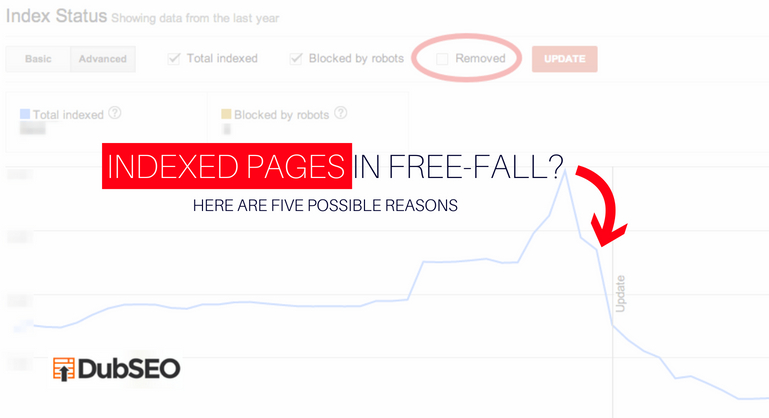

Your webpages need to be indexed by Google and other search engines to gain visibility and grow. Pages that are not indexed will not rank.

So how do you keep track of how many indexed pages you have?

Different ways of checking will give different results, but here we focus on the number of indexed pages that Google reports.

If webpages are not being indexed, it could mean that Google doesn’t like the page or finds it difficult to crawl. If your indexed-page count does begin to go down, there are several possible causes.

Here are some hints on how to discover what the issue is and correct a decreasing number of indexed pages.

Ensure that your pages return a proper 200 HTTP header status.

Has the server experienced frequent or lengthy downtime? Has your domain expired recently, or was the renewal late?

Action

Use a free HTTP header status checking tool to find out whether the right status is being returned. For very big sites, crawling tools like Screaming Frog, Deep Crawl, Botify, or Xenu can help to test this.

The header status you need is 200. Sometimes a 3xx status (other than 301), a 4xx, or a 5xx error may be returned instead; none of these is good news for URLs you want indexed.
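
For a short list of URLs, the same check can be sketched with Python's standard library. This is a minimal sketch rather than a replacement for the crawling tools above: the function names `fetch_status` and `classify_status` are made up for this example, redirects are deliberately not followed so that the first status code stays visible, and it assumes the server answers `HEAD` requests the same way it answers `GET`.

```python
import urllib.error
import urllib.request


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    """Stop urllib from following redirects so the first status is visible."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None  # urllib then raises HTTPError carrying the 3xx code


def fetch_status(url, timeout=10):
    """Return the HTTP status code of the first response for `url`."""
    opener = urllib.request.build_opener(_NoRedirect)
    request = urllib.request.Request(url, method="HEAD")
    try:
        with opener.open(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # 3xx (not followed), 4xx and 5xx responses all arrive here.
        return err.code


def classify_status(code):
    """Group a status code the way the article does: 200 and 301 vs the rest."""
    if code == 200:
        return "ok"
    if code == 301:
        return "permanent redirect"
    if 300 <= code < 400:
        return "other redirect"
    if 400 <= code < 500:
        return "client error"
    if 500 <= code < 600:
        return "server error"
    return "unexpected"
```

Calling `classify_status(fetch_status(url))` for each URL you want indexed gives a quick list of pages that return anything other than 200.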

Sometimes a change of CMS, server settings, or backend programming leads to a change of folder, domain, or subdomain, which can have the knock-on effect of altering the URLs on your site.

Search engines may still remember the old URLs, and if those URLs are not redirected properly, many pages will become de-indexed as a result.

Action

It may be possible to visit a copy of the previous site and collect all the old URLs, so that you can go back and point a redirect from each old URL to the new URL it relates to.
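
Once the old and new URLs have been paired up, the redirects themselves can be generated rather than typed by hand. The sketch below assumes an Apache server, where `Redirect 301 <path> <target>` is a standard `mod_alias` directive; the helper name `build_redirect_rules` and the example URLs are invented for illustration, and other servers need a different output format.

```python
from urllib.parse import urlsplit


def build_redirect_rules(url_map):
    """Turn {old_url: new_url} into Apache `Redirect 301` lines.

    Assumes old paths contain no spaces; such paths would need quoting.
    """
    rules = []
    for old_url, new_url in sorted(url_map.items()):
        # Apache matches on the path of the old URL, not the full URL.
        old_path = urlsplit(old_url).path or "/"
        rules.append(f"Redirect 301 {old_path} {new_url}")
    return rules


# Hypothetical mapping collected from a copy of the previous site.
example_map = {
    "http://old.example.com/about.html": "https://www.example.com/about/",
    "http://old.example.com/services.html": "https://www.example.com/services/",
}
example_rules = build_redirect_rules(example_map)
```

Each generated line can then be reviewed and added to the server configuration; `Redirect` matches by path prefix, so check more specific paths before broader ones.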

Fixing duplicate content often involves implementing canonical tags, noindex meta tags, 301 redirects, or robots.txt disallows. Any of these can lead to a fall in the number of indexed URLs.

In this instance, decreasing indexed pages may actually not be a bad thing.

Action

As this can be beneficial for your site, just check that this is the actual reason for the fall in indexed pages and that it is not due to any other issue.
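
One way to confirm the reason is to look at what a de-indexed page actually declares. The following is a rough sketch using Python's built-in HTML parser; the class and function names are illustrative, and it only inspects the HTML itself, so a noindex sent via an HTTP header or a robots.txt disallow would not show up here.

```python
from html.parser import HTMLParser


class IndexingHints(HTMLParser):
    """Collect the canonical URL and any robots noindex from a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attrs = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attrs.get("rel", "").lower().split():
            self.canonical = attrs.get("href")
        elif tag == "meta" and attrs.get("name", "").lower() == "robots":
            if "noindex" in attrs.get("content", "").lower():
                self.noindex = True


def indexing_hints(html):
    """Return (canonical_url_or_None, has_noindex) for an HTML string."""
    parser = IndexingHints()
    parser.feed(html)
    return parser.canonical, parser.noindex
```

If a page that dropped out of the index has a canonical tag pointing elsewhere or a noindex tag, the decrease is probably the intended result of the duplicate-content fix.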

Some servers have bandwidth restrictions because of the cost of higher bandwidth; if this is the case, you will need to upgrade the servers. Sometimes the issue is hardware related and can be solved by upgrading the server's processing power or memory.

Some sites block an IP address when a visitor accesses too many pages at too high a rate. This setting is a strict way of warding off DDoS attacks, but it can also have a negative impact on your website.

The limit is typically set as a number of pages per second. If the threshold is too low, normal search-engine bot crawling might hit it, meaning that the bots will not be able to crawl the site properly.

Action

If your issues are being caused by server bandwidth limitation, then it is time for a service upgrade.

If server processing or memory issues are the problem, then apart from upgrading the hardware, check whether any server-caching technology is in place; caching puts the server under less stress.

If any anti-DDoS software is in place, relax the settings or whitelist Googlebot so that it is never blocked. Look out for fake Googlebots, though, and be sure that you detect Googlebot correctly. Use the same procedure to detect Bingbot.
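
Detecting Googlebot correctly usually means a reverse DNS lookup on the visiting IP, a check that the hostname belongs to the search engine, and a forward lookup to confirm that the hostname resolves back to the same IP. Below is a minimal sketch of that procedure; the function name and the injectable lookup parameters are choices made for this example, and the hostname suffixes should be checked against each search engine's current documentation.

```python
import socket

# Hostname suffixes as commonly documented; verify before relying on them.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")
BING_SUFFIXES = (".search.msn.com",)


def is_verified_bot(ip, allowed_suffixes, reverse_lookup=None, forward_lookup=None):
    """Return True if `ip` passes the reverse-then-forward DNS check."""
    reverse_lookup = reverse_lookup or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward_lookup = forward_lookup or (lambda host: socket.gethostbyname_ex(host)[2])
    try:
        host = reverse_lookup(ip)
        if not host.lower().rstrip(".").endswith(allowed_suffixes):
            return False  # hostname is not under the search engine's domain
        # The hostname must resolve back to the IP that made the request.
        return ip in forward_lookup(host)
    except OSError:
        # Covers failed DNS lookups (socket.herror, socket.gaierror).
        return False
```

A request claiming to be Googlebot would be whitelisted only when `is_verified_bot(ip, GOOGLE_SUFFIXES)` returns `True`; passing `BING_SUFFIXES` applies the same procedure to Bingbot. The lookup parameters exist so the logic can be tested without live DNS.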

Sometimes search engine spiders see things differently than we do.

Some developers build sites in the ways they prefer without realising the implications for SEO.

Sometimes, an out-of-the-box CMS is used without a prior check of how search engine friendly it is.

Sometimes it is done on purpose to cloak content, in other words, in an attempt to ‘game’ the search engines.

At other times, a website may have been compromised by hackers, who cause a completely different page to be shown to Google in order to promote their own hidden links or to cloak 301 redirections to their own sites.

Action

Use the Google Search Console fetch and render feature to check whether Googlebot is in fact seeing the same content that you see.

Try translating your page with Google Translate. You could also check Google’s cached page, although there are ways around these checks that will still allow content to be cloaked.
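
As a rough first pass, you can also request the same URL with a browser-style user agent and with Googlebot's user agent string and compare the two responses. The sketch below is only a partial check: it will miss cloaking that keys on Googlebot's IP addresses rather than its user agent, and some difference between responses is normal on dynamic pages. The function names and the browser user agent value are illustrative.

```python
import difflib
import urllib.request

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"  # illustrative value


def fetch_as(url, user_agent, timeout=10):
    """Download `url` while presenting the given User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def similarity(html_a, html_b):
    """Line-based similarity between two HTML documents, from 0.0 to 1.0."""
    matcher = difflib.SequenceMatcher(None, html_a.splitlines(), html_b.splitlines())
    return matcher.ratio()
```

For example, `similarity(fetch_as(url, BROWSER_UA), fetch_as(url, GOOGLEBOT_UA))` returning a value well below 1.0 is a prompt to inspect the two versions by hand, not proof of cloaking.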

Key Performance Indicators (KPIs) help evaluate how well an SEO campaign performs, and often centre on rankings and organic search traffic. KPIs normally focus on business goals that are tied to income.

An increase in the number of indexed pages may increase the number of keywords you can rank for, which can result in higher profits. However, the point of watching indexed pages is simply to gauge whether the search engines can crawl and index your webpages correctly.

Always remember that your webpages cannot rank when a search engine cannot see them, crawl them, or index them.

Mostly, a fall in the number of indexed pages is not a good thing, but fixing duplicate, thin, or low-quality content may also reduce the number of pages indexed, and that is actually good.

Learn how to evaluate your website, starting with these 5 possible explanations for why your indexed pages are going down.